How to prevent your fraud defenses from leaking data to adversarial AI models

Each day, the Spec platform stops millions of attacks that would otherwise bypass fraud defenses by exploiting signals harvested to train adversarial AI models. Our platform blinds and misleads these models to protect merchants, marketplaces, and their users from being defrauded. We put together a quick primer on the ways we’re seeing cybercriminals use artificial intelligence to bypass traditional fraud defense technology.

What is an adversarial AI model?

AI models need to “train” on data to tune their accuracy. Adversarial models used by fraud attack tools are primarily concerned with determining when they’ve been detected, so they can automatically adjust their attacks and evade capture. These models consume a number of signals to improve their performance. The two major ones we’ll talk about in this primer are:

- Did the attack get blocked or redirected?

- How long did the site take to process a response for the attack?

This data is critical for attack models that are building countermeasures to fraud defenses, and the vast majority of websites and applications hand it to their attackers’ models freely.

Merchants, marketplaces, and fintechs that use Spec don’t give this data up to adversarial models. Here’s a glimpse into how.

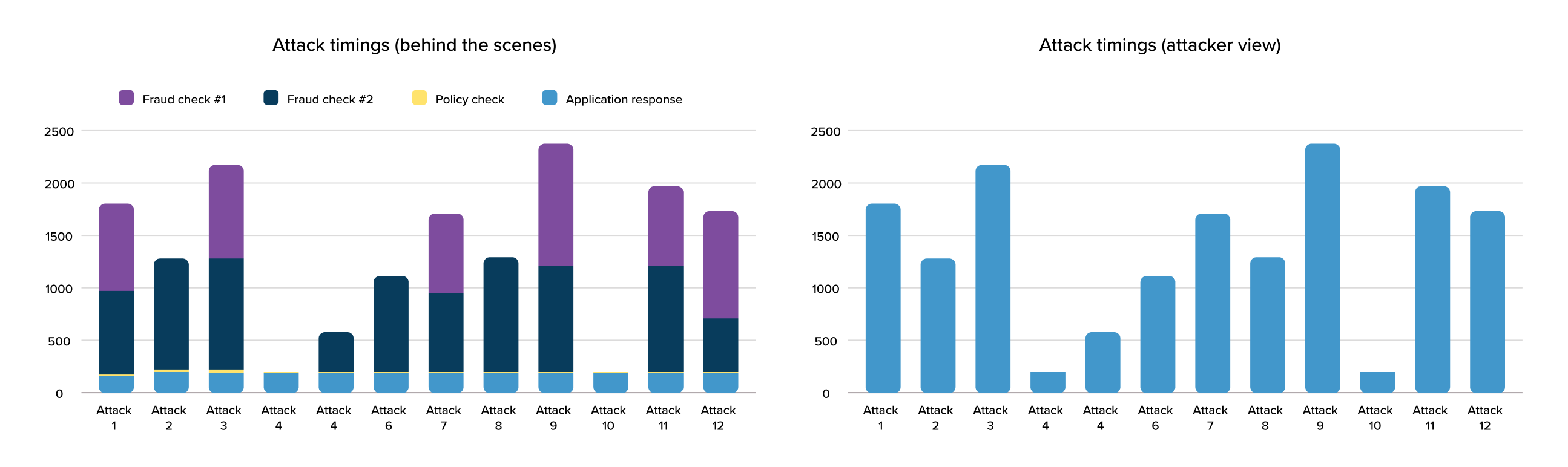

Attackers time your defenses, so your defenses should be too fast to time.

In virtually all fraud platforms, online risk checks are made at the time of request, impacting page load time in a way that’s detectable to the attacker. This allows the attacker to infer which of their attacks trigger more risk checks, or risk checks that take longer to process. By profiling millions of these requests, attackers can model which attack behaviors blend in with a merchant’s good user traffic. Like picking a lock, these tools use this resistance to shape their attacks to bypass a merchant’s fraud defenses, looking for the right way to form requests that yield a response that is identical in processing time to a good user interaction.
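
To make the timing signal concrete, here is a minimal sketch of the arithmetic involved. It is a toy simulation, not a description of any real platform: the constants `BASE_MS` and `CHECK_COST_MS`, and both function names, are assumptions invented for illustration. It models a site where each risk check runs serially at request time, then shows that the gap in average response time is visible from response timing alone.

```python
import statistics

# Hypothetical numbers: a fixed page cost plus a fixed cost per serial risk check.
BASE_MS = 40.0
CHECK_COST_MS = 25.0


def serial_latency_ms(num_checks: int) -> float:
    """Response time when every risk check adds directly to request latency."""
    return BASE_MS + CHECK_COST_MS * num_checks


def timing_gap_ms(probe_ms: list[float], baseline_ms: list[float]) -> float:
    """Difference in mean response time between two groups of requests."""
    return statistics.mean(probe_ms) - statistics.mean(baseline_ms)


# Requests that look like good users trigger one check in this toy model.
baseline = [serial_latency_ms(1) for _ in range(5)]
# Suspicious requests trigger two additional checks.
probe = [serial_latency_ms(3) for _ in range(5)]

gap = timing_gap_ms(probe, baseline)
```

In this toy model the gap is exactly the cost of the two extra checks. Real traffic is far noisier, which is why profiling takes many requests, but the principle is the same: if extra scrutiny costs extra time, the response time reveals the scrutiny.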

Unlike other platforms, the Spec platform orchestrates fraud checks quickly and invisibly to the end user. By managing continuous risk checks with parallel network calls optimized for speed, our platform can completely remove latency from the equation and blind adversarial models to how much processing is happening to determine risk. This strips adversarial AI models of the critical feedback signals they need to model more effective attacks. As an added benefit, pages load faster, which everyone is generally happy about.
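
As a rough illustration of why parallel calls matter, the sketch below runs several simulated risk checks concurrently with Python's `asyncio`. It is a generic example of the idea, not Spec's implementation: the check names, the delays, and the helper functions are all hypothetical, and `asyncio.sleep` stands in for a real network call.

```python
import asyncio
import time


async def risk_check(name: str, delay_s: float) -> tuple[str, bool]:
    """Stand-in for a network call to a risk service (hypothetical)."""
    await asyncio.sleep(delay_s)
    return name, True  # pretend every check passes


async def run_checks_in_parallel(checks: dict[str, float]) -> dict[str, bool]:
    """Start every check at once and wait for all of them to finish."""
    results = await asyncio.gather(
        *(risk_check(name, delay) for name, delay in checks.items())
    )
    return dict(results)


def timed_run(checks: dict[str, float]) -> tuple[dict[str, bool], float]:
    """Run the checks and report how long the whole batch took."""
    start = time.perf_counter()
    results = asyncio.run(run_checks_in_parallel(checks))
    return results, time.perf_counter() - start


# Hypothetical checks and their simulated latencies in seconds.
CHECKS = {"device": 0.05, "velocity": 0.10, "identity": 0.15}
results, elapsed = timed_run(CHECKS)
```

Run serially, these three checks would take about 0.30 seconds. Run in parallel, the batch takes roughly as long as the slowest single check, so adding more checks does not add proportionally more time for an observer to measure.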

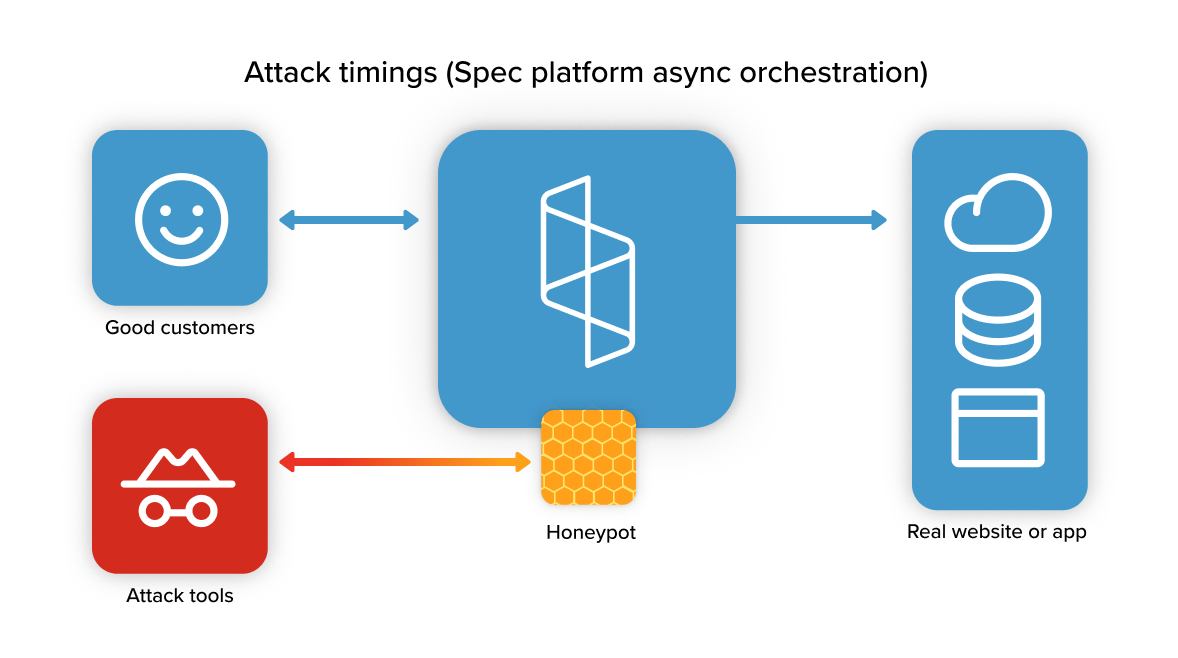

Don’t let attackers know you’ve detected them.

Conventionally, attack traffic is either blocked or diverted to a challenge experience. This has long been considered a best practice, as the thinking was that blocking an attacker would encourage them to spend their time attacking another site. Unfortunately, this creates training data for adversarial models attempting to learn how to bypass your defenses.

The Spec platform offers a honeypot feature that responds to attack requests with a flawless replica of your actual application that’s designed to mislead and confuse an adversarial model. This does three things:

- Introduces deliberate errors into an adversarial model’s training data.

- Dramatically shrinks the pool of genuine application responses an adversarial model can train on.

- Harvests compromised identity data that the attacker holds and may use in future attacks.
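
The routing idea behind a honeypot can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the Spec feature itself: `Response`, `Honeypot`, `real_login`, and `route` are hypothetical names, the `is_attack` flag is assumed to come from an upstream risk decision, and the login flow is a stand-in for whatever your application actually serves.

```python
import secrets
from dataclasses import dataclass, field


@dataclass
class Response:
    status: int
    body: dict


@dataclass
class Honeypot:
    """Decoy backend: answers like the real app and records what was submitted."""
    captured_usernames: list = field(default_factory=list)

    def handle(self, username: str) -> Response:
        # Record the identity the attacker tried, for later review.
        self.captured_usernames.append(username)
        # Same status and body shape as the real handler; the token is worthless.
        return Response(200, {"ok": True, "session": secrets.token_hex(16)})


def real_login(username: str) -> Response:
    """Stand-in for the genuine application handler (hypothetical)."""
    return Response(200, {"ok": True, "session": secrets.token_hex(16)})


def route(username: str, is_attack: bool, honeypot: Honeypot) -> Response:
    """Send flagged traffic to the decoy instead of blocking or challenging it."""
    if is_attack:
        return honeypot.handle(username)
    return real_login(username)


honeypot = Honeypot()
good = route("alice", is_attack=False, honeypot=honeypot)
bad = route("stolen_user", is_attack=True, honeypot=honeypot)
```

From the outside, `good` and `bad` have the same status code and the same response shape, so there is no block or redirect for an adversarial model to learn from. The difference lives only on the server side, where the decoy has logged the identity the attacker submitted.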

We’re looking forward to sharing more as we release new platform features to orchestrate defenses against advanced attacks that leverage adversarial AI models. Reach out today and see a demo of the Spec platform in action.