The Agentic Internet Is Already Here

About 64% of customer journeys we see at Spec are driven by agents, bots, or scrapers. The internet, by and large, is no longer for humans.

Of the agents we see, over 98% are “ghost agents” that pose as humans. While a growing number of these are good purchasers, they make up only about 2% of the good purchases we see across our network today. The vast majority of today’s agents are maliciously uncovering, exploiting, and automating abuse methods against merchants, banks, and marketplaces.

The defensive model has changed. Security teams once built systems to detect scripted automation, headless browsers, credential stuffing, card testing, and large-scale account creation. The model was clear: identify abusive automation, block it, and tune detection as attackers adjusted.

That model worked when automation was noisy, repetitive, and relatively unsophisticated, but it’s no longer sufficient.

The internet is entering a new phase in which autonomous AI agents pursue complex goals, adapt behavior, and coordinate activity across longer periods of time. These agents are not just faster bots. They’re systems capable of decision-making, persistence, strategy, and self-adaptation.

This shift changes the detection problem at a structural level.

The Bot & Fraud Era Was About Scaling Abuse

The driver for bots was the speed and scale at which they could operate. Unrestrained by the limitations of a human using a mobile device or web browser, bots could act first to snatch up inventory and automate simple, tedious processes. This interaction style generated obvious signals like high request rates, failed JavaScript execution, unusual device fingerprints, and repeated patterns across sessions.

Detection systems were designed to identify those signals. Rate limiting, client-side instrumentation, browser fingerprinting, and heuristic scoring became standard practice. Even as attacker frameworks improved, they still left mechanical traces.
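To make the bot-era approach concrete, here is a minimal sketch of one of the techniques named above, rate limiting over a sliding time window. The class name, the thresholds, and the `client_id` key are illustrative assumptions, not a description of any particular product.

```python
from collections import defaultdict, deque


class SlidingWindowRateCheck:
    """Flags a client that sends more than max_requests within window_seconds."""

    def __init__(self, max_requests, window_seconds):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        # Hypothetical in-memory store: client_id -> timestamps of recent requests.
        self._hits = defaultdict(deque)

    def is_over_limit(self, client_id, now):
        hits = self._hits[client_id]
        hits.append(now)
        # Drop timestamps that have fallen out of the window.
        while hits and hits[0] <= now - self.window_seconds:
            hits.popleft()
        return len(hits) > self.max_requests


check = SlidingWindowRateCheck(max_requests=3, window_seconds=10.0)

# A noisy script: four requests within three seconds trips the limit.
burst = [check.is_over_limit("scripted-client", t) for t in (0.0, 1.0, 2.0, 3.0)]

# A paced client: one request every twenty seconds never trips it.
paced = [check.is_over_limit("paced-client", t) for t in (0.0, 20.0, 40.0, 60.0)]
```

A check like this catches the noisy burst but says nothing about the paced client, which is exactly the gap that patient, adaptive automation can exploit.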

In the bot & fraud era, automation was the defining characteristic. If traffic looked automated, it was likely malicious.

That assumption allowed security teams to reduce risk by detecting non-human behavior and blocking anything that wasn’t beneficial to their business.

The Agent Era Is About Objectives

AI agents introduce a different dynamic.

An agent doesn’t simply execute a static script. It can evaluate context, interpret responses, and adjust its approach. It can slow down if activity appears suspicious and distribute actions across accounts and time periods. It can also vary its interaction patterns to resemble legitimate users.

Most importantly, it’s capable of long-running thinking and experimentation in the pursuit of a defined objective.

Put simply, it will learn a business’s defenses and how to bypass them.

That objective might be building a network of synthetic accounts, exploiting promotional incentives, testing stolen credentials over time, or probing transaction flows for weaknesses. The agent is optimizing for success, and in abusive use cases success means extracting value from the business it is pointed at.

This distinction matters because detection systems trained to identify mechanical repetition will struggle against adaptive coordination. Goal-oriented abuse changes its signals once it figures out that the signals it generates are keeping it from achieving its goal.

The Classification Model Has Expanded

Many existing systems still operate on a binary distinction between human and bot. Traffic is classified as legitimate or automated. Risk scoring is built around that divide.

In an agentic environment, that binary framework becomes limiting.

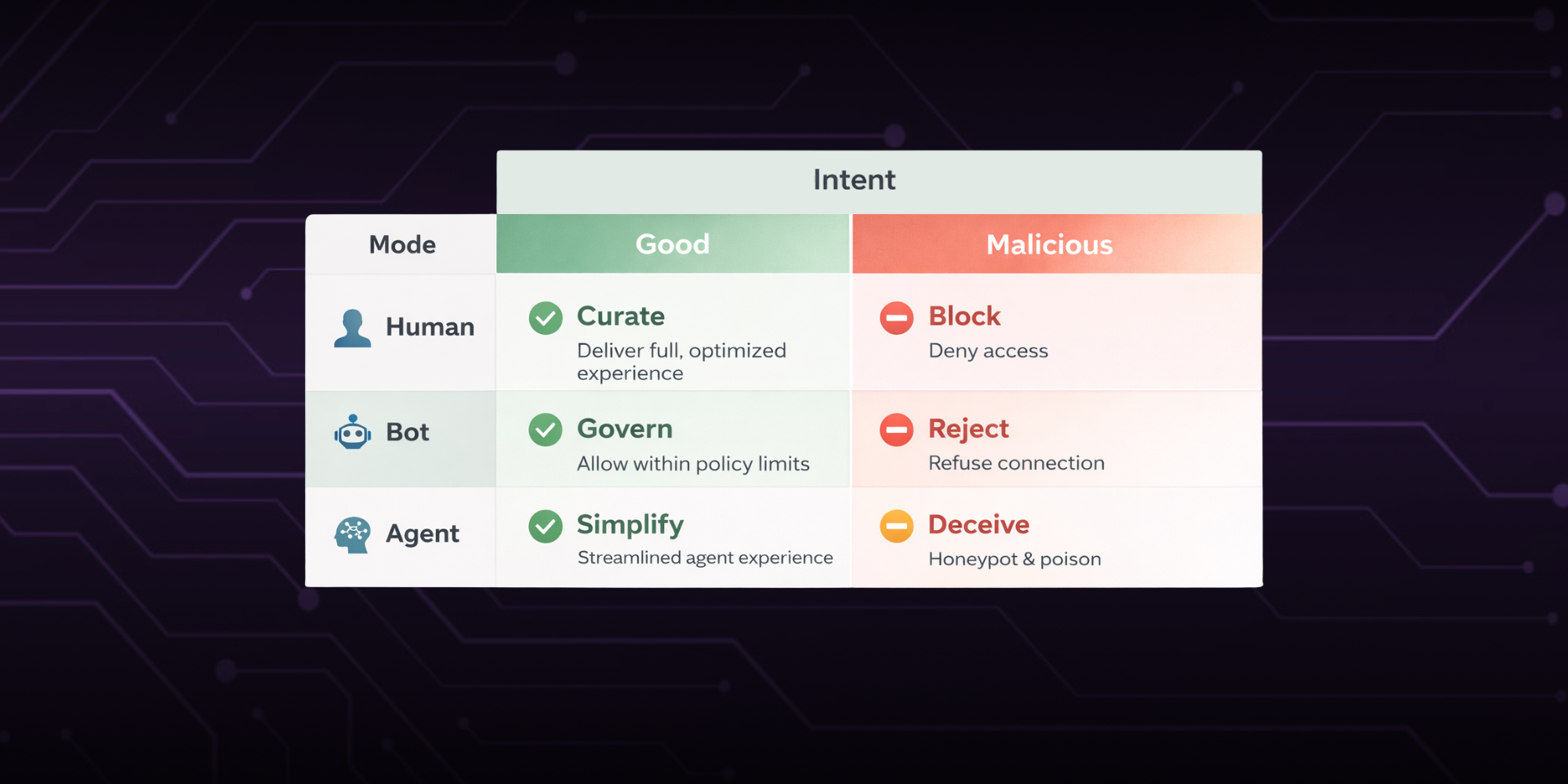

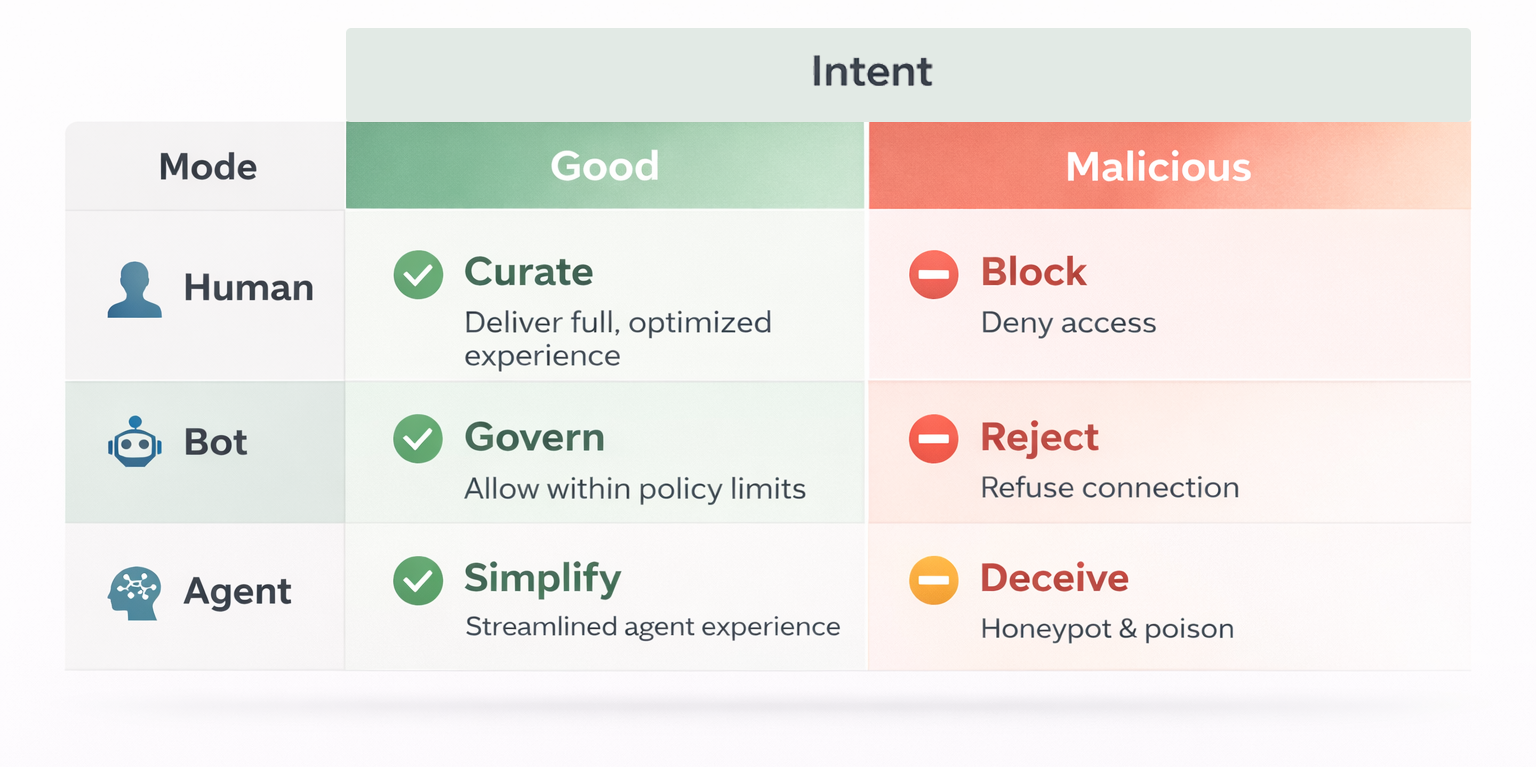

There is now a three-by-two matrix that matters: the mode (humans, bots, or agents) combined with the intent (good or malicious).

Intent matters the most.

Intent tells you whether a visitor is trying to engage with your business or extract value from it. Remember: a non-trivial percentage of agentic visitors are responsible for real good purchases today, and that number is only expected to grow. No business leader is trying to discriminate against good agentic purchases.

The mode matters because it tells you how to respond to each combination of intent and mode:

- Good agents should be guided to a structured agentic purchase flow that’s impossible to misinterpret.

- Good humans should be protected through a thoughtfully crafted purchase journey.

- Good bots should be monitored.

Meanwhile, for the different modes of malicious intent:

- Malicious agents need to be artfully misdirected, honeypotted, and poisoned.

- Malicious humans must be denied access to trusted customer flows.

- Malicious bots should be blocked.

Detection is no longer just about spotting automation. It’s about accurately classifying entities and understanding intent.
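The six combinations listed above can be sketched as a simple lookup table. This is only an illustration of the three-by-two matrix: the enum names and the response labels are hypothetical shorthand for the handling described in the lists, and the hard part (deciding the mode and intent of a visitor) is assumed to have happened upstream.

```python
from enum import Enum


class Mode(Enum):
    HUMAN = "human"
    BOT = "bot"
    AGENT = "agent"


class Intent(Enum):
    GOOD = "good"
    MALICIOUS = "malicious"


# Hypothetical response labels, one per cell of the three-by-two matrix.
RESPONSES = {
    (Mode.AGENT, Intent.GOOD): "guide_to_structured_agentic_purchase_flow",
    (Mode.HUMAN, Intent.GOOD): "protect_purchase_journey",
    (Mode.BOT, Intent.GOOD): "monitor",
    (Mode.AGENT, Intent.MALICIOUS): "misdirect_honeypot_poison",
    (Mode.HUMAN, Intent.MALICIOUS): "deny_trusted_customer_flows",
    (Mode.BOT, Intent.MALICIOUS): "block",
}


def choose_response(mode, intent):
    """Return the handling for an already-classified visitor."""
    return RESPONSES[(mode, intent)]
```

For example, `choose_response(Mode.AGENT, Intent.GOOD)` returns the agentic purchase flow label, while the same mode with `Intent.MALICIOUS` returns the misdirection label. A binary human-versus-bot classifier has no way to express that difference.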

Why Client-Side Detection Becomes Fragile

A significant portion of bot detection today depends on client-side instrumentation, particularly JavaScript execution and browser-level signals. The logic is straightforward. Real users execute browser code naturally. Bots often fail or expose inconsistencies.

This approach assumes that detection signals can rely on cooperation from the client environment. In an adversarial setting, nothing on the client side can be assumed safe.

Client-side code can be blocked, modified, or emulated. Automation frameworks increasingly replicate legitimate browser behavior. As AI agents improve, they can adapt to client-side checks dynamically.

Any signal that can be suppressed or manipulated by the adversary introduces structural fragility.

Durable detection must minimize dependence on signals that attackers can easily interfere with. Systems that rely heavily on client-side data collection will face increasing evasion as agent tooling matures.
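One way to picture "minimizing dependence" is a score that deliberately gives less weight to anything the client could have suppressed or forged. The sketch below is an assumption-laden illustration: the `Signal` type, the signal names, and the 0.25 weight are all hypothetical, and a real system would choose and calibrate these very differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    name: str
    value: float           # 0.0 (looks benign) to 1.0 (looks suspicious)
    client_reported: bool  # True if the client could suppress or forge it


def risk_score(signals, client_weight=0.25):
    """Weighted mean of signal values, down-weighting client-reported signals."""
    total = 0.0
    weight_sum = 0.0
    for s in signals:
        weight = client_weight if s.client_reported else 1.0
        total += weight * s.value
        weight_sum += weight
    return total / weight_sum if weight_sum else 0.0


# Hypothetical signals: the client-side check passed cleanly,
# but a server-observed signal looks suspicious.
signals = [
    Signal("js_challenge", 0.0, client_reported=True),
    Signal("server_observed_anomaly", 1.0, client_reported=False),
]

discounted = risk_score(signals)                   # client signal counts for less
naive = risk_score(signals, client_weight=1.0)     # client signal trusted equally
```

With equal trust, a clean client-side result pulls the score down to the midpoint; with the discount, the server-observed signal dominates. The design choice being illustrated is only the asymmetry, not the specific numbers.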

The Limitations of Transaction-Based Risk

Another structural limitation of many current systems is their focus on isolated events. Risk is often evaluated at the level of a login attempt, a checkout session, or a single transaction.

Agents distribute risk intentionally.

They create accounts gradually, build trust signals over time, and interact in ways that appear normal within each session. They also coordinate across multiple identities to reduce the visibility of any single account.

Viewed individually, these transactions may not trigger alerts. The suspicious pattern emerges only when behavior is compared and connections are drawn across journeys.

If identity is defined narrowly at the transaction or device level, coordinated activity can remain hidden.

In an agentic internet, identity must reflect persistence across time and behavior across touchpoints. It must link entities in ways that reveal coordination rather than just scoring isolated actions.
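As a rough sketch of what "linking entities" can mean in practice, the example below groups accounts that share any observed attribute, using a union-find structure. The function name, the account IDs, and the attribute strings are hypothetical; real linkage would rely on far richer signals and would need to handle attributes that are legitimately shared.

```python
from collections import defaultdict


def link_accounts(observations):
    """Group accounts that share any attribute, directly or transitively.

    observations: iterable of (account_id, attribute) pairs.
    Returns a list of sorted account lists, largest cluster first.
    """
    parent = {}

    def find(x):
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps lookups fast
            x = parent[x]
        return x

    def union(a, b):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    first_seen = {}  # attribute -> first account observed with it
    for account, attribute in observations:
        find(account)  # register the account even if it links to nothing
        if attribute in first_seen:
            union(first_seen[attribute], account)
        else:
            first_seen[attribute] = account

    clusters = defaultdict(set)
    for account in list(parent):
        clusters[find(account)].add(account)
    return sorted((sorted(c) for c in clusters.values()),
                  key=lambda c: (-len(c), c))


# Hypothetical observations gathered across separate sessions.
observations = [
    ("acct_a", "device:1"),
    ("acct_b", "device:1"),
    ("acct_b", "addr:9"),
    ("acct_c", "addr:9"),
    ("acct_d", "device:7"),
]
clusters = link_accounts(observations)
```

Here `acct_a` and `acct_c` never share an attribute directly, yet they land in the same cluster through `acct_b`. That transitive link is invisible to any score computed on a single transaction or device.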

The Economic Incentives Are Changing

The rise of AI agents is an economic evolution as well as a technical one.

Agentic AI has lowered the barrier to building adaptive systems. Tooling is becoming more accessible because the human expertise required to build it has fallen sharply. Off-the-shelf components allow attackers to integrate decision-making and automation without building everything from scratch.

This reduces the cost of sophisticated abuse.

When the cost of coordination drops, more actors can deploy strategic attacks. Promotion abuse networks become easier to operate. Synthetic identity farms can be managed with greater precision. Account takeover attempts can be tuned in real time.

As capability increases and cost decreases, the volume and sophistication of agent-driven abuse will grow.

Security systems built for the economics of the bot era will struggle in the economics of the agent era.

The Operational Impact on Fraud Teams

Fraud and risk teams are already under pressure to balance loss reduction with customer experience.

Agent-driven abuse complicates this further.

When abuse looks human at the session level, blunt defenses become more tempting. Tighter thresholds, additional verification steps, and aggressive blocking can reduce risk in the short term, but they also increase friction for legitimate users.

Without a deeper identity layer, teams are forced to choose between tolerating sophisticated abuse and overcorrecting with user friction. When business models change to evade risk, customer acquisition and lifetime value can contract, leading businesses to route more customers to manual review in order to keep their doors open as wide as possible.

Where the Impact Is Emerging First

Marketplaces and financial institutions are among the earliest sectors to experience these pressures.

Marketplaces are seeing coordinated account farming, incentive abuse, and synthetic seller networks that are maintained with increasing sophistication. Individual accounts often appear legitimate. The underlying coordination becomes clear only when activity is mapped across journeys.

Financial institutions face automated synthetic identity creation, agent-assisted account takeover, distributed transaction testing, and AI-supported social engineering. Again, single interactions may look normal, but the broader pattern reveals intent.

In both environments, risk is less about obvious spikes in traffic and more about subtle orchestration.

What an Evolved Detection Model Requires

An agentic-ready web surface must classify humans, bots, and agents with greater accuracy while reducing reliance on fragile client-side dependencies. It must build identity across the full journey rather than at the session level, surface coordination and network behavior, and uncover intent by analyzing visitor interactions in real time.

This requires architectural choices that prioritize persistence, linkage, and server-side observability.

It also requires acknowledging that automation is no longer the only defining signal of abuse. Adaptive behavior and goal alignment matter just as much.

Organizations that continue to optimize solely for bot detection will find themselves tuning controls for a problem that is no longer dominant.

A Structural Shift

The internet is becoming agentic. Autonomous systems – both good and malicious – are participating in transactions, interactions, and workflows at increasing scale. That participation will continue to expand.

This is a structural evolution in how activity is generated and coordinated online. Detection strategies must evolve accordingly.

The earlier organizations adapt their models to this reality, the better positioned they will be to manage risk without sacrificing user experience.

The agentic internet is already here.


Nate Kharrl, CEO and co-founder at Spec, has built leading solutions for application security and fraud challenges since the early days of the cloud era. Drawing from his cyber experience at Akamai, ThreatMetrix, and eBay, Nate helped found Spec to focus on the needs of businesses operating in a landscape of increasing AI risks. Under Nate’s leadership, Spec grew from its mid-pandemic founding to raise $30M in venture-backed funding to build solutions used by Fortune 500 companies transacting billions in online commerce. Spec’s service offerings today include protective measures for websites and APIs that specialize in defending against attacks designed to bypass bot defenses and risk assessment platforms.