Why JavaScript-Based Defenses Are Brittle

Client-side JavaScript became the default for modern detection stacks because it was convenient. You could instrument the browser, collect device and interaction signals, and score risk before a transaction completed. If the browser behaved strangely, that was suspicious. If it executed code cleanly, that was reassuring.

That model assumed the browser environment is trustworthy enough to measure.

In an agentic internet, that assumption no longer holds.

The Status Quo Was Built for a Different Problem

Client-side defenses were designed for an era when abuse was defined by visible automation.

But the internet has shifted. A significant portion of traffic is now generated by autonomous systems pursuing objectives, not just executing scripts.

These systems are not merely trying to get through a page. They’re optimizing toward goals:

- Build synthetic account networks

- Extract promotional value over time

- Test and refine transaction flows

- Probe defenses iteratively

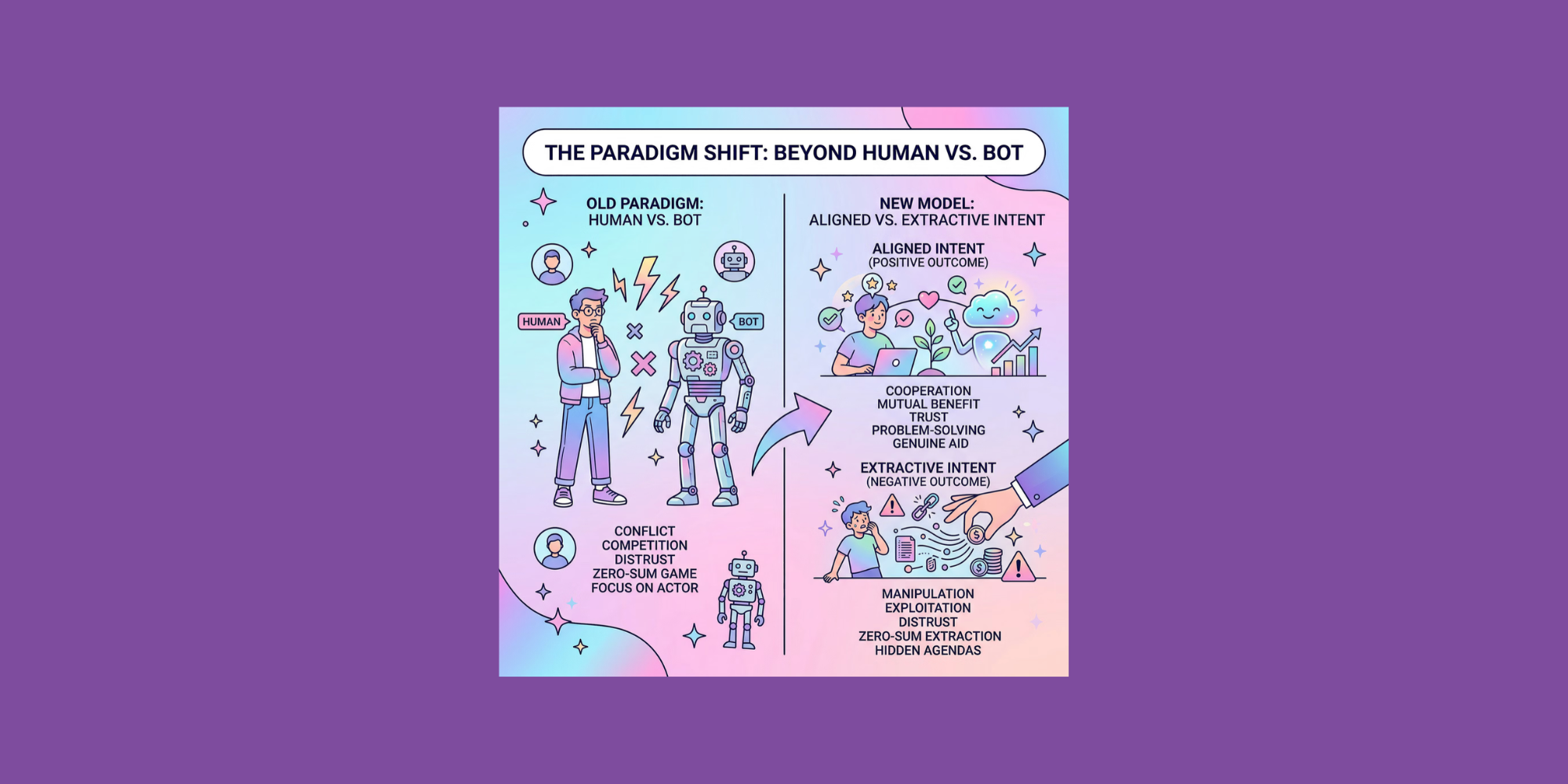

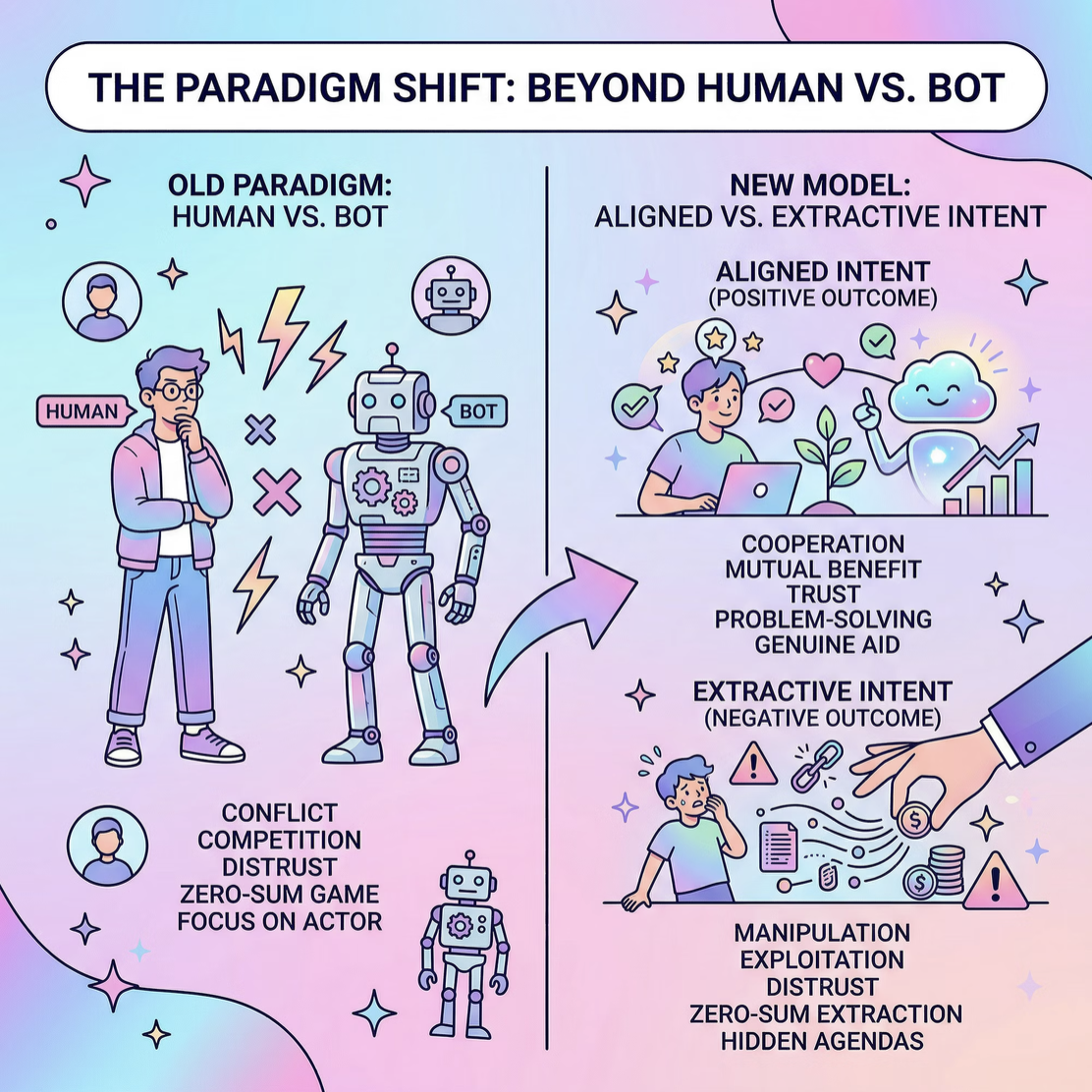

When behavior is goal-oriented and adaptive, the detection question changes. It is no longer whether a session is automated, but whether the entity behind it is aligned with your business or extracting value from it.

Client-Side JavaScript Assumes Cooperation

Every client-side system shares a structural weakness. It depends on signals returned from an environment controlled by the visitor.

That environment can:

- Block scripts

- Modify outputs

- Emulate expected behaviors

- Replay sanitized signals

When detection depends on what happens in the browser, it depends on something the adversary can interfere with. In an agentic environment, that fragility compounds.
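To make the weakness concrete, here is a minimal Python sketch of a scoring function that trusts client-reported fields. The schema (`webdriver`, `plugins_count`, `mouse_events`), the weights, and the function name are illustrative assumptions, not any real product's design. The point is only that the same automation produces opposite scores depending on what the visitor's environment chooses to report.

```python
from dataclasses import dataclass


@dataclass
class ClientReport:
    """Signals reported by script running in the visitor's browser (hypothetical schema)."""
    webdriver: bool      # automation flag the browser claims to expose
    plugins_count: int   # number of plugins the browser claims to have
    mouse_events: int    # pointer events the script claims to have observed


def naive_client_risk(report: ClientReport) -> int:
    """Score risk using only what the client chose to send back (assumed weights)."""
    score = 0
    if report.webdriver:
        score += 50
    if report.plugins_count == 0:
        score += 25
    if report.mouse_events == 0:
        score += 25
    return score


# Automation that reports itself honestly.
honest_automation = ClientReport(webdriver=True, plugins_count=0, mouse_events=0)

# The same automation after the operator rewrites the outgoing report.
sanitized_automation = ClientReport(webdriver=False, plugins_count=3, mouse_events=42)

print(naive_client_risk(honest_automation))     # 100
print(naive_client_risk(sanitized_automation))  # 0
```

Nothing about the underlying behavior changed between the two reports. Only the payload did, and the payload is the part the visitor controls.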

An adaptive agent can:

- Experiment with different execution paths

- Detect when friction increases

- Adjust interaction patterns

- Suppress or simulate signals strategically

If JavaScript execution increases scrutiny, the agent learns that. If certain browser attributes trigger risk, the agent routes around them.

Client-side detection becomes something to optimize against.
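A toy simulation shows how little effort this optimization can take. The sketch below assumes a hypothetical defense that adds friction above a fixed request rate; `is_challenged`, the threshold value, and the search range are all invented for illustration. An agent that can observe when friction appears needs only a handful of probes to find the boundary.

```python
def is_challenged(requests_per_minute: int, hidden_threshold: int = 37) -> bool:
    """Stand-in for a defense that adds friction above a fixed rate (assumed value)."""
    return requests_per_minute > hidden_threshold


def probe_threshold(low: int = 1, high: int = 1000) -> tuple[int, int]:
    """Binary-search the highest rate that draws no challenge.

    Returns (highest_unchallenged_rate, number_of_probes_used).
    """
    probes = 0
    while low < high:
        mid = (low + high + 1) // 2   # round up so the loop always makes progress
        probes += 1
        if is_challenged(mid):
            high = mid - 1            # friction observed: back off
        else:
            low = mid                 # no friction: this rate is safe
    return low, probes


rate, probes = probe_threshold()
print(rate)  # 37
```

Any static, observable rule behaves like `is_challenged` here: each response leaks information about where the boundary sits.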

Execution Is Not Intent

When JavaScript runs perfectly, you learn that a browser-like environment executed your code. You haven’t learned:

- Whether the entity is acting independently or as part of a coordinated network

- Whether it is building long-term abuse infrastructure

- Whether it is optimizing toward extraction rather than engagement

- Whether this session connects to a broader pattern

Client-side instrumentation is session-bound and surface-level, while intent is cross-session and emerges from persistence and coordination.

In an agentic internet, entities can behave normally within any single interaction. The abnormality appears only when you analyze behavior across journeys and over time.
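The difference between the two views can be sketched with a small server-side aggregation. The event log, the `entity_id` values, and both limits below are hypothetical; `entity_id` stands in for whatever persistent identity the server is able to establish. Every session passes a per-session check, yet one entity stands out once the same events are grouped across sessions.

```python
from collections import defaultdict

# Hypothetical server-side log: (entity_id, session_id, promo_redemptions).
events = [
    ("entity-a", "s1", 1),
    ("entity-a", "s2", 1),
    ("entity-a", "s3", 1),
    ("entity-a", "s4", 1),
    ("entity-b", "s5", 1),
]

PER_SESSION_LIMIT = 1  # assumed: one redemption in a session looks normal
PER_ENTITY_LIMIT = 2   # assumed: more than two across sessions is unusual


def sessions_over_limit(log):
    """Session-bound view: flag sessions that exceed the per-session limit."""
    return [session for _, session, count in log if count > PER_SESSION_LIMIT]


def entities_over_limit(log):
    """Cross-session view: sum redemptions per entity, then flag the totals."""
    totals = defaultdict(int)
    for entity, _, count in log:
        totals[entity] += count
    return sorted(e for e, total in totals.items() if total > PER_ENTITY_LIMIT)


print(sessions_over_limit(events))  # []
print(entities_over_limit(events))  # ['entity-a']
```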

Agents Disrupt the Old Detection Boundary

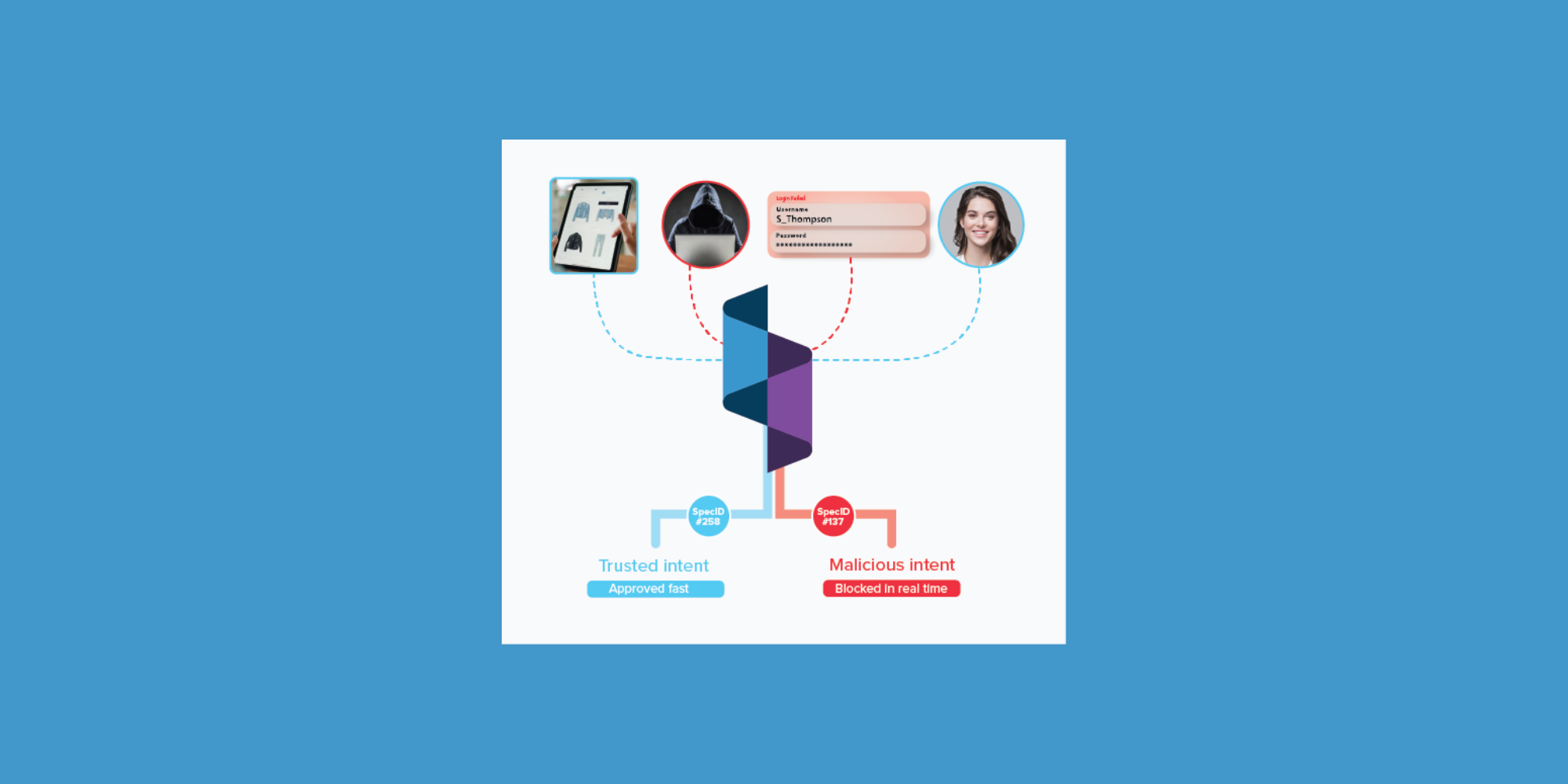

The historical boundary was human versus bot. Today the meaningful boundary is aligned intent versus extractive intent.

Some agents generate legitimate purchases, while others orchestrate abuse. Both can execute JavaScript, mimic human pacing, and look clean at the session level.

The more adaptive agents become, the less meaningful execution-based signals are. The detection problem shifts upward, from surface behavior to objective alignment.

Durable Detection Lives Outside the Client

Signals that live in adversary-controlled environments are inherently brittle. Durable detection requires:

- Server-side observability

- Identity that persists across sessions

- Linkage across touchpoints

- Network-level coordination analysis

- Real-time intent modeling

When identity is defined by full-journey behavior rather than a single browser session, disabling JavaScript does not eliminate visibility. When detection is anchored in coordination and long-term objectives, emulating a browser is insufficient.

An entity must maintain coherence across time, accounts, and interactions. That’s much harder to fake.
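One way to picture linkage across accounts is a union-find pass over attributes the server observes directly. This is a minimal sketch under stated assumptions, not a description of any particular product: the account names, the attribute strings, and `link_accounts` are all hypothetical. Accounts that share any attribute, directly or through a chain of other accounts, end up in the same cluster.

```python
from collections import defaultdict


def link_accounts(accounts: dict[str, set[str]]) -> list[set[str]]:
    """Group accounts that share any attribute value, directly or transitively."""
    parent = {name: name for name in accounts}

    def find(x: str) -> str:
        # Walk to the cluster root, shortening the path along the way.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    def union(a: str, b: str) -> None:
        parent[find(a)] = find(b)

    first_seen: dict[str, str] = {}
    for name, attrs in accounts.items():
        for attr in attrs:
            if attr in first_seen:
                union(name, first_seen[attr])  # shared attribute: merge clusters
            else:
                first_seen[attr] = name

    clusters: dict[str, set[str]] = defaultdict(set)
    for name in accounts:
        clusters[find(name)].add(name)
    return list(clusters.values())


# Hypothetical accounts with server-observed attributes.
accounts = {
    "acct-1": {"card:1111", "addr:12 Elm St"},
    "acct-2": {"card:1111", "phone:555-0100"},
    "acct-3": {"phone:555-0100", "addr:9 Oak Ave"},
    "acct-4": {"card:2222", "addr:40 Pine Rd"},
}

clusters = link_accounts(accounts)
largest = max(clusters, key=len)
print(sorted(largest))  # ['acct-1', 'acct-2', 'acct-3']
```

Here `acct-1` and `acct-3` share nothing directly, but both connect through `acct-2`. Keeping dozens of accounts free of any such overlap over time is the kind of coherence that is hard to fake.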

A Strategic Shift

The agentic internet undermines reliance on JavaScript as a foundational detection layer. As autonomous systems become more capable and more common, they will:

- Emulate browsers convincingly

- Adapt to surface-level checks

- Treat detection systems as environments to optimize against

Execution-based signals will become table stakes, not differentiators. The strategic shift is to move detection away from what can be disabled and toward what persists.

JavaScript can still be additive, just not foundational.

In an internet increasingly shaped by autonomous, objective-driven systems, defenses that depend on cooperation from the client will degrade.

The systems that hold up will be the ones built around signals the adversary can’t turn off: persistent identity, behavior that accumulates across journeys, and coordination patterns that reveal intent over time.

Once autonomous systems are interacting with your business, a browser that executes your code correctly stops being a meaningful signal.

Understanding what that entity is trying to accomplish becomes the real detection problem.

--

Building Durable Defenses for the Agentic Internet

The next generation of defenses will rely on persistent identity, cross-journey visibility, and intent analysis. If you’re rethinking how detection should work in an agent-driven web, let’s compare notes.


Nate Kharrl, CEO and co-founder at Spec, has built leading solutions for application security and fraud challenges since the early days of the cloud era. Drawing from his cyber experience at Akamai, ThreatMetrix, and eBay, Nate helped found Spec to focus on the needs of businesses operating in a landscape of increasing AI risks. Under Nate’s leadership, Spec grew from its mid-pandemic founding to raise $30M in venture-backed funding to build solutions used by Fortune 500 companies transacting billions in online commerce. Spec’s service offerings today include protective measures for websites and APIs that specialize in defending against attacks designed to bypass bot defenses and risk assessment platforms.