Financial Institutions Face Synthetic Automation

Financial institutions have always operated in a high-adversary environment. Banks, lenders, card issuers, fintechs, and payment platforms are among the most attractive targets for fraud on the internet.

Under the traditional defensive model, fraud teams focused on detecting stolen credentials, blocking scripted attacks, catching mule activity, tightening onboarding, and challenging suspicious transactions. Attackers certainly evolved, but many of their methods still produced visible operational signals: they moved too fast, repeated the same workflows, and left recognizable traces across devices, sessions, and requests.

That model is starting to break.

Financial institutions are now facing a different kind of pressure: synthetic automation. This is the use of AI-assisted and agentic systems to create, test, coordinate, and scale abuse across financial workflows in ways that look increasingly legitimate at the point of interaction.

Financial services is one of the few industries where identity, access, authorization, compliance, and money movement all converge. As automation becomes more adaptive, fraud in that environment becomes structurally harder to detect.

Synthetic automation changes how fraud shows up in financial systems, how synthetic identities are built and maintained, how account takeover campaigns are staged, and how social engineering is executed.

Fraud teams will have to measure more than isolated events if they want to see full attacks.

Why financial institutions are an early proving ground

Financial institutions are natural targets for agent-led abuse because the incentives are clear and immediate. There’s direct monetary value behind account creation, loan origination, wallet provisioning, card issuance, transaction approval, customer service intervention, and payment recovery. Every one of those workflows contains a decision point an attacker can influence.

That makes financial services especially vulnerable to systems that can pursue an objective over time.

In the older bot era, the central problem was scale. Attackers automated credential stuffing, card testing, application floods, or bonus abuse because speed created leverage.

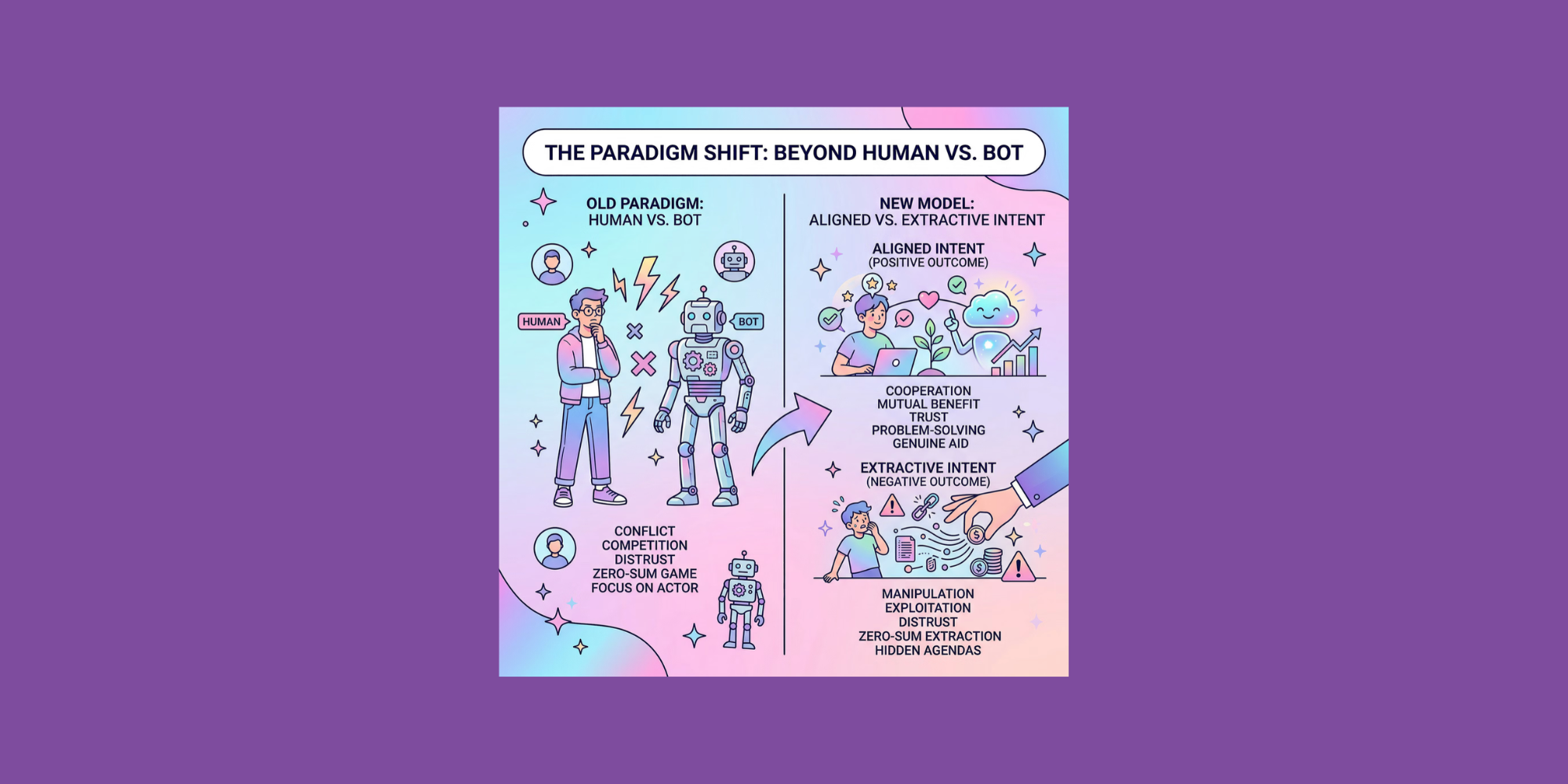

In the emerging agent era, the central problem is coordinated intent. Agents can evaluate responses, learn how a system works, slow down when necessary, vary behavior, and distribute activity across identities and sessions to avoid obvious detection. Automation is no longer defined only by mechanical repetition. It’s increasingly defined by objectives, persistence, and self-adjustment.

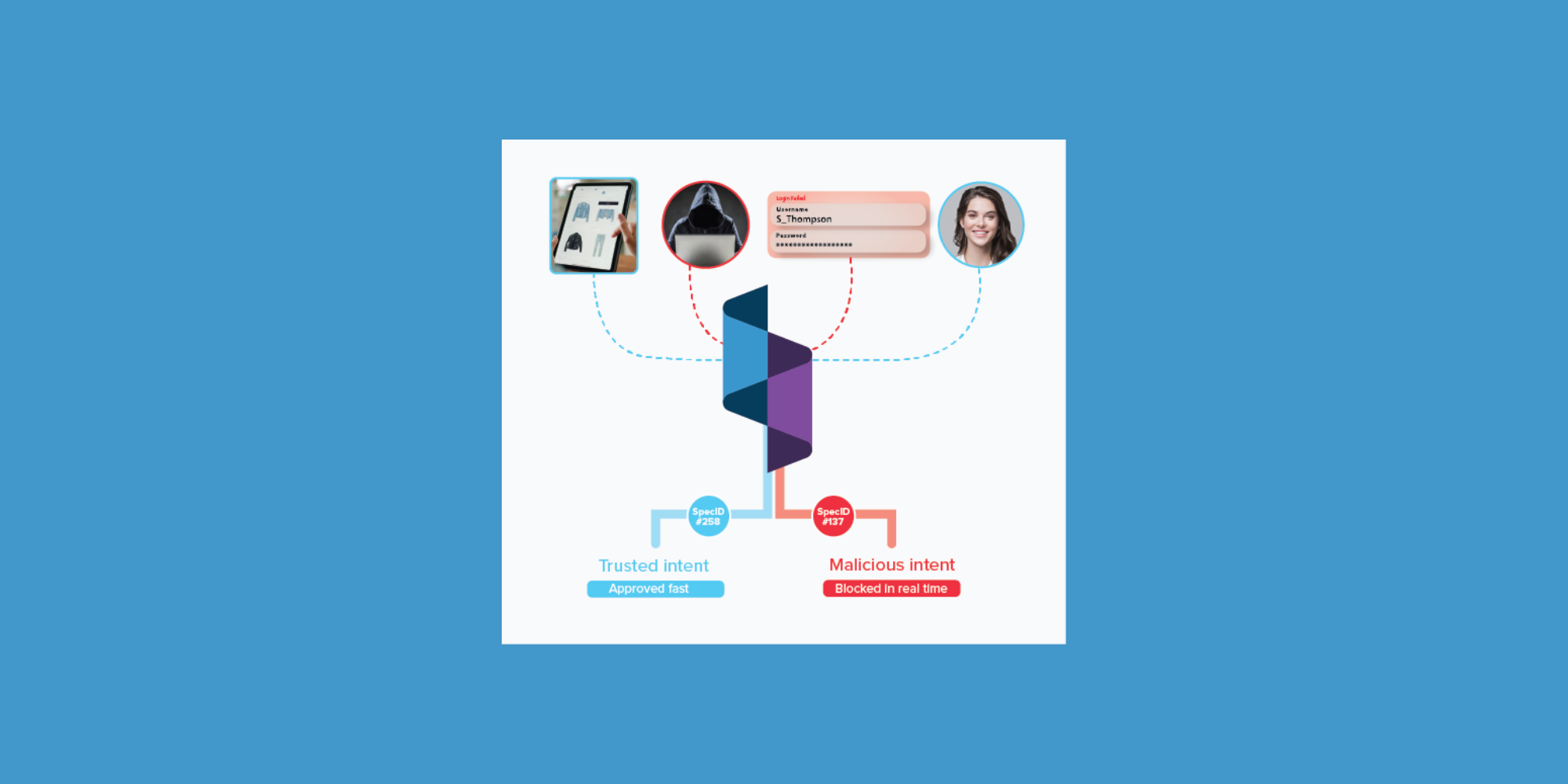

For financial institutions, that means the old distinction between normal customer behavior and automation becomes less useful. What matters more is whether an entity is trying to engage with the institution or extract value from it, and whether the institution can observe that intent across the full journey rather than at a single step.

This becomes especially clear in three areas where financial institutions are already under pressure: synthetic identity fraud, account takeover, and social engineering.

Synthetic identity fraud

Synthetic identity fraud has always depended on assembling enough believable information to pass onboarding and survive scrutiny. Traditionally, that meant combining real and fake personally identifiable information (PII), cultivating accounts slowly, and exploiting gaps in identity verification, credit building, or account monitoring.

What changes with synthetic automation is the efficiency and adaptability of the process.

FinCEN warned in November 2024 that criminals were using generative AI to alter or generate identity document images, including driver’s licenses and passports, and combining those images with stolen or fake PII to create synthetic identities. Bank Secrecy Act (BSA) reporting showed that malicious actors had successfully opened accounts using fraudulent identities suspected to have been produced with GenAI, then used those accounts to receive and launder proceeds from other fraud schemes, including check fraud, credit card fraud, authorized push payment fraud, and loan fraud.

Synthetic identity fraud is no longer just a document or KYC problem. It’s becoming a system-level orchestration problem.

An agent can now help with each stage of the workflow. It can source or blend PII, generate document variants, test onboarding paths, observe which verifications fail, retry with modified inputs, and maintain a population of accounts over time. It doesn’t need to succeed immediately. It only needs to learn enough about the institution’s controls to improve its odds.

This is where many financial institutions remain vulnerable. They’re still built to evaluate whether an application or account opening event looks suspicious in the moment. But synthetic identity operations are rarely about a single event; they’re about cumulative credibility. The suspicious pattern often emerges only when the institution can connect identities, behaviors, devices, routes, and outcomes across a longer period of time.

That makes journey-level identity especially important. If identity is defined too narrowly at the account-opening, device, or transaction level, synthetic operations can stay fragmented. If identity is modeled across linked behaviors and persistent patterns, the institution has a better chance of seeing the network rather than just the individual application.
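To make the idea of linking concrete, here is a minimal Python sketch that groups applications sharing any device, phone number, or address into clusters using a union-find (disjoint-set) structure. The record fields (`app_id`, `device_id`, `phone`, `address`) and the choice of linking keys are illustrative assumptions, not a description of any institution’s or vendor’s data model. What it shows: two applications with nothing in common can still land in the same cluster through a third application that touches both.

```python
from collections import defaultdict


class UnionFind:
    """Disjoint-set structure used to merge records that share an attribute."""

    def __init__(self):
        self.parent = {}

    def find(self, x):
        # Register unseen items as their own root.
        self.parent.setdefault(x, x)
        root = x
        while self.parent[root] != root:
            root = self.parent[root]
        # Path compression: point every node on the path directly at the root.
        while self.parent[x] != root:
            nxt = self.parent[x]
            self.parent[x] = root
            x = nxt
        return root

    def union(self, a, b):
        ra, rb = self.find(a), self.find(b)
        if ra != rb:
            self.parent[rb] = ra


def link_applications(applications, keys=("device_id", "phone", "address")):
    """Group application IDs that share any value on the given linking keys.

    Returns clusters as lists of app IDs, largest cluster first.
    """
    uf = UnionFind()
    first_seen = {}  # (key, value) -> first application ID that carried it
    for app in applications:
        app_id = app["app_id"]
        uf.find(app_id)  # ensure applications with no links still appear
        for key in keys:
            value = app.get(key)
            if value is None:
                continue
            marker = (key, value)
            if marker in first_seen:
                uf.union(first_seen[marker], app_id)
            else:
                first_seen[marker] = app_id
    clusters = defaultdict(list)
    for app in applications:
        clusters[uf.find(app["app_id"])].append(app["app_id"])
    return sorted(clusters.values(), key=len, reverse=True)


if __name__ == "__main__":
    # Hypothetical applications: A1 and A3 share nothing directly,
    # but A2 shares a phone with A1 and a device with A3.
    apps = [
        {"app_id": "A1", "device_id": "d1", "phone": "555-0101", "address": "1 Elm St"},
        {"app_id": "A2", "device_id": "d2", "phone": "555-0101", "address": "9 Oak Ave"},
        {"app_id": "A3", "device_id": "d2", "phone": "555-0199", "address": "3 Pine Rd"},
        {"app_id": "A4", "device_id": "d9", "phone": "555-0142", "address": "7 Birch Ln"},
    ]
    print(link_applications(apps))
```

Shared attributes are noisy in practice (a household legitimately shares an address, a carrier can recycle a phone number), so a real system would likely weight links rather than treat every shared value as equal. This sketch deliberately keeps every link binary to show the transitive effect.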

A useful real-world example comes from a 2025 Department of Justice case involving a synthetic identity fraud scheme that targeted a victim bank with hundreds of fake identities. According to the DOJ, one conspirator hired a Russian actress to hold a photograph of a fake driver’s license so that a synthetic applicant would appear real, and the scheme was carried out hundreds of times across different synthetic identities. The specific facts are unusual, but the underlying lesson is not: synthetic fraud is operational, iterative, and designed to survive front-door controls.

The same pattern now shows up well beyond onboarding.

ATO is becoming more distributed and deceptive

Account takeover (ATO) has long been one of the clearest examples of how fraud creates downstream losses and erodes customer trust at the same time. It hits both the institution and the account holder and forces difficult tradeoffs between security and friction.

What’s changing now is how ATO campaigns are being executed.

In late 2025, the FBI warned that cybercriminals were impersonating financial institution staff or websites to steal money and information in ATO schemes. Since January 2025, the FBI’s Internet Crime Complaint Center (IC3) had received more than 5,100 complaints reporting ATO fraud, with losses exceeding $262 million. The bureau said these schemes often rely on social engineering through texts, calls, emails, and fraudulent websites impersonating legitimate financial institutions.

Separately, the Justice Department announced in December 2025 that it had seized infrastructure used in a bank ATO scheme involving fraudulent search engine ads and fake banking websites. DOJ said the fraud ring was responsible for more than $28 million in unauthorized bank transfers from U.S. victims and that the compromised infrastructure contained stolen credentials for thousands of victims.

Those cases show the modern shape of ATO. More often, it’s a coordinated sequence rather than a brute-force login attack. The attacker impersonates the institution, redirects the victim to a fake environment, captures credentials, and uses those credentials on the legitimate institution’s site. The attack spans channels and identities, using persuasion as much as automation.

Many bank defenses are still oriented around spotting anomalous login behavior or scoring a suspicious session. But the session is often the least interesting part of the attack.

The meaningful signal lies in the path that led there: which identities were targeted, what communications preceded the login, which recovery flows were tested, which other accounts behaved similarly, and which devices or routes appeared across multiple victims. Taken together, these actions reveal an objective rather than a moment.

A single session may look normal, but the broader attack does not. Agents can distribute risk intentionally, spreading activity across accounts and time and keeping each interaction within tolerable thresholds while the larger objective unfolds across the journey.
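A toy comparison shows why per-session scoring can miss this. The Python sketch below flags transfers two ways: per session, and aggregated per destination over a sliding 24-hour window. The thresholds, the `Session` record, and its `payee_id` field (a destination account shared across victims) are hypothetical assumptions chosen for illustration. Sessions that each stay under the per-session limit can still add up to a clear signal once they are connected.

```python
from collections import defaultdict
from dataclasses import dataclass

# Illustrative thresholds (assumptions); real values would be institution-specific.
SESSION_LIMIT = 1_000.00       # single-session amount that triggers review
AGGREGATE_LIMIT = 3_000.00     # combined amount across linked sessions
WINDOW_SECONDS = 24 * 3600     # look-back window for aggregation


@dataclass
class Session:
    session_id: str
    account_id: str
    payee_id: str    # hypothetical: destination account receiving the transfer
    amount: float
    timestamp: int   # Unix seconds


def per_session_flags(sessions):
    """Event-level view: flag only sessions that individually exceed the limit."""
    return [s.session_id for s in sessions if s.amount > SESSION_LIMIT]


def aggregate_flags(sessions):
    """Journey-level view: flag payees whose inbound total within any
    WINDOW_SECONDS span exceeds AGGREGATE_LIMIT, returning the source
    accounts in the first window that crossed it."""
    by_payee = defaultdict(list)
    for s in sessions:
        by_payee[s.payee_id].append(s)
    flagged = {}
    for payee, group in by_payee.items():
        group.sort(key=lambda x: x.timestamp)
        start, running = 0, 0.0
        for end, s in enumerate(group):
            running += s.amount
            # Drop sessions that fall outside the window ending at this session.
            while s.timestamp - group[start].timestamp > WINDOW_SECONDS:
                running -= group[start].amount
                start += 1
            if running > AGGREGATE_LIMIT:
                flagged[payee] = sorted({x.account_id for x in group[start:end + 1]})
                break
    return flagged


if __name__ == "__main__":
    base = 1_700_000_000
    # Five accounts each send $900 to the same payee, one hour apart.
    sessions = [
        Session(f"s{i}", f"acct{i}", "P-77", 900.0, base + i * 3600) for i in range(5)
    ]
    print(per_session_flags(sessions))
    print(aggregate_flags(sessions))
```

In the example, `per_session_flags` returns nothing because no single transfer exceeds $1,000, while `aggregate_flags` flags payee `P-77` once the fourth $900 transfer pushes the running total past $3,000. Grouping by payee is only one possible linking key; the same windowed aggregation could run over devices, network routes, or clusters of linked identities.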

That same blurring of persuasion and technical abuse is now reshaping social engineering itself.

Automated social engineering

One of the biggest mistakes financial institutions can make is treating social engineering as separate from automation. That distinction no longer holds.

Deepfakes, voice cloning, AI-generated text, spoofed websites, and adaptive scripts are making social engineering more scalable and more believable. The human target still exists, but the machinery around the deception is becoming increasingly automated.

The Federal Trade Commission has warned that AI-generated deepfakes threaten to “turbocharge” impersonation fraud by enabling scammers to impersonate individuals with greater precision and at wider scale.

Social engineering is an identity integrity issue. If a fraudster can convincingly impersonate a customer, an employee, or even the institution itself, then authentication, authorization, support, and trust workflows all become targets.

This is especially dangerous in environments where teams still assume a verified channel is a trustworthy channel. A caller’s voice can be cloned, video can be spoofed, and documents can be generated. A support interaction can be guided by a model that adapts in real time to objections, questions, and verification prompts.

Institutions need controls that don’t rely too heavily on any single client-provided or user-presented signal.

That leads to the deeper weakness in many existing stacks.

Legacy controls are brittle

A lot of current financial fraud infrastructure still reflects the assumptions of the last era. It looks for suspicious bursts, repeated scripts, bad devices, impossible velocity, or obvious mismatch signals. Those controls still have value. But on their own, they’re increasingly brittle.

If a control depends on a signal the adversary can shape, suppress, or rehearse against, that control becomes easier to evade over time. This is especially true for client-side instrumentation and browser-level measurements.

In financial services, brittleness shows up beyond the browser as well. Knowledge-based verification can be socially engineered. Document checks can be gamed with synthetic media. One-time passcodes can be intercepted or manipulated through impersonation. Step-up verification can be passed if the fraudster has already shaped the context well enough. Support processes can be exploited if the fraudster knows how the institution handles urgent or emotionally charged cases.

Fraud teams often compensate for this fragility by tightening thresholds, but that typically shifts cost onto legitimate users in the form of more friction, false positives, manual review, and customer dissatisfaction.

That is particularly painful in financial services, where customers are highly sensitive to both trust and convenience.

The deeper issue is intent

A model built only to identify automation will miss sophisticated abuse that behaves slowly and convincingly. Scoring transactions alone misses preparation and coordination. Account-level identity misses the relationships between entities. Relying solely on fraud outcomes means intervening too late.

Intent analysis changes the center of gravity.

It pushes institutions to look for objective-seeking behavior across touchpoints and encourages them to connect onboarding, login, servicing, payments, and recovery events into a single investigative surface. It also helps distinguish between different types of non-human interaction, which matters in a world where not every agentic interaction is malicious.

For banks and fintechs, a visitor or account should not just be evaluated as “good” or “bad” based on a narrow event. It should be evaluated based on whether its behavior aligns with legitimate engagement or value extraction, and whether its path reveals coordinated, persistent, objective-driven abuse.
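One way to approximate objective-seeking behavior across touchpoints is to merge events from every channel into a single timeline per linked entity and look for ordered sequences that suggest value extraction. The Python sketch below assumes events already carry a resolved `entity_id` (for example, produced by an upstream identity-linking step). The event type names and the four-step pattern (`password_reset`, `contact_change`, `payee_added`, `payment`, completed within six hours) are hypothetical, chosen only to illustrate ordered matching; they are not a known fraud signature.

```python
from collections import defaultdict

# Hypothetical event types and pattern, for illustration only.
EXTRACTION_PATTERN = ("password_reset", "contact_change", "payee_added", "payment")
MAX_SPAN_SECONDS = 6 * 3600


def build_journeys(events):
    """Merge events from every channel into one time-ordered timeline per entity."""
    journeys = defaultdict(list)
    for e in events:
        journeys[e["entity_id"]].append(e)
    for timeline in journeys.values():
        timeline.sort(key=lambda ev: ev["ts"])
    return journeys


def matches_pattern(timeline, pattern=EXTRACTION_PATTERN, max_span=MAX_SPAN_SECONDS):
    """True if the pattern's steps occur in order (other events may interleave)
    and the whole sequence completes within max_span seconds of its first step."""
    for i, first in enumerate(timeline):
        if first["type"] != pattern[0]:
            continue
        step = 0
        for ev in timeline[i:]:
            if ev["ts"] - first["ts"] > max_span:
                break
            if ev["type"] == pattern[step]:
                step += 1
                if step == len(pattern):
                    return True
    return False


def flag_entities(events):
    """Return entity IDs whose cross-channel journey contains the pattern."""
    return sorted(
        entity for entity, timeline in build_journeys(events).items()
        if matches_pattern(timeline)
    )


if __name__ == "__main__":
    events = [
        {"entity_id": "E1", "channel": "call_center", "type": "password_reset", "ts": 0},
        {"entity_id": "E1", "channel": "web", "type": "login", "ts": 600},
        {"entity_id": "E1", "channel": "web", "type": "contact_change", "ts": 900},
        {"entity_id": "E1", "channel": "mobile", "type": "payee_added", "ts": 1800},
        {"entity_id": "E1", "channel": "mobile", "type": "payment", "ts": 2400},
        {"entity_id": "E2", "channel": "web", "type": "login", "ts": 0},
        {"entity_id": "E2", "channel": "web", "type": "payment", "ts": 300},
    ]
    print(flag_entities(events))
```

Matching is an in-order subsequence check, so unrelated events such as an ordinary login may appear between the steps. Each step on its own is routine customer behavior spread across the call center, web, and mobile channels; it is the ordered combination, compressed into a short window, that suggests an objective.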

What financial institutions should do differently

The institutions best positioned for success will be the ones that stop treating fraud events as isolated points and start treating them as connected journeys.

That means building identity in a way that persists across time, channels, and workflows by linking applications, sessions, devices, recovery attempts, customer service interactions, document submissions, and payment behavior into something that reveals coordination. It means reducing overdependence on fragile front-end signals and increasing emphasis on server-side observability, behavioral linkage, and network-level patterning.

It also means changing some operational habits.

Fraud teams should assume that some synthetic identities will be cultivated patiently rather than pushed aggressively, and that social engineering and technical fraud will increasingly arrive as one blended attack. Attackers will optimize around business rules, not just technical weaknesses.

There is also a growing regulatory and supervisory case for this shift. FinCEN has found that compromised credentials had a disproportionate financial impact compared with other forms of identity exploitation. The Federal Reserve has likewise argued that banks and the official sector need better fraud data sharing to respond more quickly to GenAI-driven threats and make fraud harder and costlier to carry out.

Identity abuse, impersonation, and AI-enabled fraud are becoming more important, more costly, and harder to detect with legacy approaches.

Conclusion

For financial institutions, synthetic automation is not a future threat. It is a present challenge that is becoming more organized.

Synthetic automation is what happens when synthetic identity fraud, ATO, impersonation, and workflow abuse stop looking like isolated fraud tactics and start behaving like adaptive systems. Attackers can coordinate better, learn faster, and look more legitimate while they automate.

For banks, lenders, fintechs, and payment platforms, the next generation of fraud defense will depend on uncovering intent across the full customer journey.

Financial institutions are among the first places where that shift is becoming impossible to ignore.

Nate Kharrl, CEO and co-founder at Spec, has built leading solutions for application security and fraud challenges since the early days of the cloud era. Drawing from his cyber experience at Akamai, ThreatMetrix, and eBay, Nate helped found Spec to focus on the needs of businesses operating in a landscape of increasing AI risks. Under Nate’s leadership, Spec grew from its mid-pandemic founding to raise $30M in venture-backed funding to build solutions used by Fortune 500 companies transacting billions in online commerce. Today, Spec’s offerings include protective measures for websites and APIs, specializing in defense against attacks designed to bypass bot defenses and risk assessment platforms.